7 Impactful Insights on AI Proctoring and Exam Security Ethics

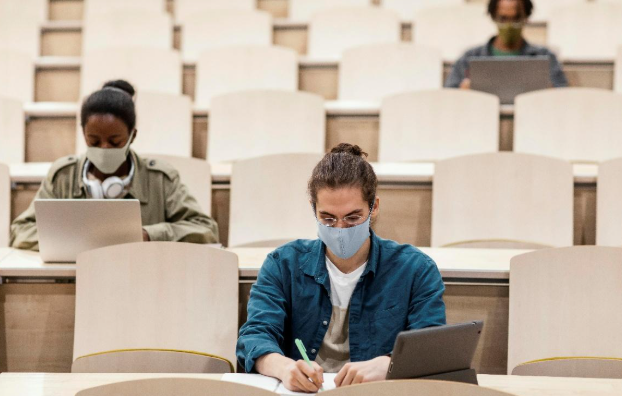

The growth of online learning has made assessments more flexible, and that flexibility has sharpened concerns about fairness, privacy, and surveillance. AI proctoring has emerged as one response: a digital monitoring approach that observes students through webcams, microphones, and artificial intelligence. It is often presented as a foolproof solution to cheating. Seen through the lens of exam security ethics, however, it raises a much larger question: How far should technology go in the name of fairness?

Advocates of AI proctoring argue that it upholds integrity by identifying suspicious behaviours, while opponents point to privacy violations and bias. Exam security ethics asks whether monitoring students with cameras and microphones amounts to a ‘guilty until proven innocent’ approach to assessment integrity. The debate between advocates and opponents, in turn, raises questions about how to balance scale and privacy in the future of education.

Balancing Fairness and Privacy in Modern Assessments

Exams are not only a way to assess knowledge; they must also be fair to everyone, and maintaining that fairness often comes into direct conflict with privacy. Many AI-driven monitoring systems track eye movements, ambient noise, or even unusual screen behavior. While this increases accountability, it also heightens anxiety by treating every movement as a potential red flag.

Where ethics and evaluation meet, transparency is critical. Students must understand what data is being collected and how it is used, and institutions must be open with all learners about the data used to assess fairness. Otherwise, the system could easily cross a line and diminish trust in institutions.

The Scalability Benefit of AI Proctoring

One of the undeniable strengths of AI in education is its scalability. When universities or certification organizations have to supervise thousands of candidates at once, human supervision alone struggles to keep up. Human proctors are expensive and can be inconsistent, while AI tools can apply uniform monitoring sustainably across a range of assessments.

This capability opens online assessments to students around the world who might otherwise lack access because of geographical or other constraints. Accessibility via AI proctoring removes a logistical barrier, but it raises additional questions about data storage, algorithmic bias, and the loss of a human being making the judgment call.

Exam Security Ethics: Beyond Cheating Prevention

When institutions adopt digital proctoring, the emphasis is often on preventing cheating. However, exam security ethics entails much more than that; it involves fairness, dignity, and inclusiveness for all students. For example, some students with disabilities may struggle if facial recognition does not work for them or if repeated alerts break their focus. Likewise, students from cultures with different norms of behavior, including body language or eye contact, may be misjudged by rigid algorithms.

This leads to an ethical question: why should preventing dishonesty mean penalizing honest students unfairly? Ethical exam design must consider inclusivity, not simply ensure that policies are enforced.

Transparency and Student Consent

Ethics in digital monitoring depends on consent. Students should be informed about what data is being collected, how it will be stored, and whether a third-party company has access. Many universities and colleges publish their data governance policies, but many others still do not.

Without transparency, mistrust develops. A learner may feel compelled to accept invasive terms because they need to sit an exam. True informed consent requires alternatives, such as in-person testing or a less invasive verification process. Transparency lets institutions build trust and credibility, and collect data without violating student rights.

AI Bias and the Risk of False Accusations

AI systems are only as unbiased as the data used to train them. If the training data is biased, the models can misidentify certain groups of students at a higher rate than others. For example, some facial recognition software performs worse on darker skin tones, which can lead to students being wrongly flagged and penalized.

A false accusation of cheating can do long-term damage to a student’s reputation and academic record, which makes accountability crucial. Who is accountable if the algorithm makes an error: the institution or the proctoring software provider? Accountability is a key component in establishing trustworthy digital assessment systems.
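One way to make the bias concern measurable is to compare how often honest students in each group are flagged. The following is a minimal sketch, not a description of any real proctoring product. It assumes an institution keeps audit records containing a group label, whether the system flagged the session, and whether a later human review confirmed cheating. The `Session` fields, the `false_flag_rates` helper, and the sample data are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    group: str     # hypothetical label, e.g. a demographic or accessibility group
    flagged: bool  # did the AI flag this session?
    cheated: bool  # did a human review confirm cheating?


def false_flag_rates(sessions):
    """Return, per group, the share of honest sessions that were flagged."""
    honest = defaultdict(int)
    wrongly_flagged = defaultdict(int)
    for s in sessions:
        if not s.cheated:
            honest[s.group] += 1
            if s.flagged:
                wrongly_flagged[s.group] += 1
    # Groups with no honest sessions are omitted, so there is no division by zero.
    return {g: wrongly_flagged[g] / honest[g] for g in honest}


# Invented sample data, for illustration only.
sample = [
    Session("A", False, False),
    Session("A", True, False),
    Session("A", False, False),
    Session("A", False, False),
    Session("B", True, False),
    Session("B", True, False),
    Session("B", False, False),
    Session("B", False, False),
    Session("B", True, True),  # confirmed case, excluded from the honest count
]
rates = false_flag_rates(sample)
```

In this invented sample, honest students in group B are flagged twice as often as those in group A. A gap like that would be a signal to investigate the model and its training data before acting on any individual flag.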

Psychological Consequences for Students

Exams are already stressful, and being constantly watched can add to the anxiety. When it is not just students’ answers but also their posture, breathing, and simple movements that are monitored, the pressure can, in extreme cases, harm performance and undermine the fairness the system is meant to protect.

Educational institutions need to consider whether the benefits of AI capabilities outweigh the costs to student mental health. They can reduce anxiety and create an environment where students feel respected by offering mock tests, stating the monitoring rules clearly, and providing a way for students to appeal.

The Future of Scalable and Ethical Proctoring

The future of online examination may ultimately rely on achieving a balance. AI proctoring is likely to stay, but it will have to evolve alongside the ethics of exam security. Promising approaches that may lessen ethical risk include blockchain verification, less intrusive behavior monitoring, and hybrid human-AI proctoring.

Ultimately, the goal is not only to reduce dishonesty but also to create a fair, secure, and respectful environment for all learners. Technology should enhance education, not intrude on it or turn it into an exercise in surveillance.
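The hybrid human-AI idea can be illustrated with a small routing rule: the AI may raise a flag, but it never issues a penalty by itself, and anything above a threshold goes to a human reviewer. This is only a sketch under stated assumptions. The `Flag` type, the suspicion score, and the `REVIEW_THRESHOLD` value are hypothetical and are not taken from any real proctoring system.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    NO_ACTION = "no_action"
    HUMAN_REVIEW = "human_review"
    # Deliberately no PENALTY outcome: only a human reviewer can decide that.


@dataclass(frozen=True)
class Flag:
    student_id: str
    reason: str
    score: float  # hypothetical suspicion score in the range 0 to 1


REVIEW_THRESHOLD = 0.6  # assumed value; a real policy would need calibration


def triage(flag: Flag) -> Outcome:
    """Route an AI-generated flag without ever penalizing automatically."""
    if flag.score >= REVIEW_THRESHOLD:
        return Outcome.HUMAN_REVIEW
    return Outcome.NO_ACTION


low = triage(Flag("s1", "looked away briefly", 0.3))
high = triage(Flag("s2", "second voice detected", 0.9))
```

Keeping the penalty decision out of the automated path is one way to preserve the human judgment call and to give students a clear point of appeal.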

Conclusion: Developing Trust in Digital Assessment

The question about AI proctoring is not whether we should use the technology, but how we can use it responsibly. At its best, AI can provide scalability and fairness across huge exam populations; at its worst, it can erode privacy, misinterpret behavior, and treat students with distrust.

Addressing exam security ethics is not simply a technology problem; it also requires transparency, consent, and accountability at every decision point. An AI proctoring system that upholds dignity will always be better than a system based entirely on suspicion. Moreover, when institutional boards design policies with exam security ethics in mind, they shape a culture that reflects how students deserve to be treated, one in which students experience reassurance rather than policing.

At the end of the day, digital exams are not going away. With ethics built into innovation, educators can use AI in a way that strengthens trust rather than replacing it, so that future assessments offer both scalability and humanity.

FAQ

Q1. What is AI in exam security?

AI in exam security refers to digital systems that monitor tests remotely using tools like webcams, microphones, and algorithms to help ensure fairness in online assessments.

Q2. Why is fairness such a big issue in digital exams?

Because online exams often lack physical supervision, institutions use AI systems to maintain fairness, prevent cheating, and create equal opportunities for all test-takers.

Q3. What are the ethical concerns with AI monitoring in exams?

The main concerns include privacy, transparency, algorithm bias, and the psychological pressure students feel under constant surveillance.

Q4. How does AI balance fairness and privacy in exams?

While AI helps detect suspicious activity, institutions must ensure that data collection is transparent, consent-based, and not overly invasive.

Q5. Can AI monitoring cause anxiety for students?

Yes. Being constantly observed can increase stress and reduce performance, especially if normal movements are flagged as suspicious.

Q6. How scalable is AI for exam monitoring?

AI systems can handle thousands of candidates simultaneously, making global assessments more accessible, though scalability must be balanced with ethics.

Q7. What role does transparency play in digital exam ethics?

Transparency ensures students know what data is being collected, how it is stored, and whether third parties have access. Without it, trust breaks down.

Q8. Who is responsible if AI makes a mistake?

Accountability can be complex—responsibility may lie with the institution, the software provider, or both if false accusations affect students.

Q9. Does AI monitoring impact students with disabilities?

Yes. Some tools fail to recognize diverse needs, such as accessibility adjustments, which can lead to unfair disadvantages.

Q10. Can cultural differences affect how AI evaluates students?

Yes. Behaviors such as eye contact, posture, or gestures may be misread by algorithms, leading to inaccurate conclusions.

Q11. How can institutions reduce ethical risks with AI exams?

They can provide mock tests, clear guidelines, student appeal processes, and hybrid models that combine human oversight with AI.

Q12. What about data privacy in AI-driven exams?

Institutions must ensure secure data storage, limited access, and clear retention policies to protect students’ rights.

Q13. Are there alternatives to invasive monitoring?

Yes. Options like blockchain verification, less intrusive behavior tracking, or in-person alternatives can help balance fairness with privacy.

Q14. How does AI bias affect assessments?

If algorithms are trained on biased data, they may unfairly flag certain groups of students, raising serious equity concerns.

Q15. What is the future of AI in exam security?

The future will likely involve hybrid systems, stronger transparency policies, and technology that prioritizes trust, fairness, and student dignity.

Penned by Riya Singh

Edited by Ragi Gilani, Research Analyst
