There is a specific sound that fills a university lecture hall when a professor announces a group project: a collective groan.

For most students, group work means logistical headaches, late-night stress, and the issue of freeloaders. We’ve all experienced it: one person does most of the work while others coast along to an easy grade. However, if we look past the frustrations, we see that evaluating and being evaluated by peers is not just an annoying hurdle; it is key to developing professional skills.

In today’s workforce, being graded by a single authority figure is a thing of the past. You are rarely judged only by a boss. You are assessed by your team, your partners, and your clients. Learning to navigate this structure is vital for success in any workplace. When done right, peer assessment is not about pointing fingers at lazy teammates; it fosters accountability and collaboration.

Here’s how to turn peer feedback from a pointless task into a valuable tool for professional growth.

Peer assessment in education is essential for improving collaboration, accountability, and professional skills among students.

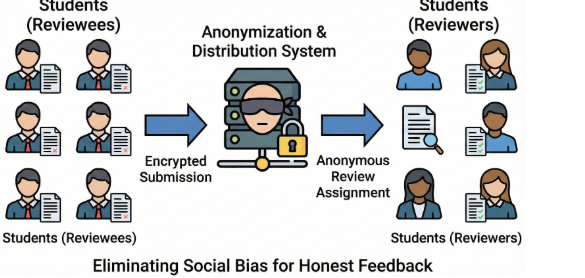

The “Blind” Review: Eliminating Social Bias

The biggest barrier to honest feedback is social hierarchy. Students often fear criticizing friends or upsetting popular classmates. This “Friendship Tax” ruins the quality of feedback. The fix is simple but often overlooked: complete anonymity during assessments.

Research shows that double-blind reviews, where neither the reviewer nor the reviewee knows the other’s identity during grading, yield more honest and useful feedback. Removing that “friend-enemy” dynamic reduces the fear of social backlash. Reviewers engage with the work rather than the person, shifting the critique from a personal attack to a professional analysis.
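As a rough illustration of how an instructor might set up such a review round, here is a minimal sketch. The function name `assign_blind_reviews`, the `Author-NN` alias format, and the student names are all hypothetical, and a real platform will handle this differently. The idea is simply that reviewers see aliases, never names, and nobody is assigned their own work.

```python
import random


def assign_blind_reviews(students, reviews_per_student=2, seed=None):
    """Give each student `reviews_per_student` peers to review, never themselves.

    Returns (assignments, aliases): assignments maps a reviewer's name to the
    anonymous aliases of the authors they review; aliases maps name -> alias.
    """
    if not 0 < reviews_per_student < len(students):
        raise ValueError("need more students than reviews per student")

    rng = random.Random(seed)
    order = list(students)
    rng.shuffle(order)

    # Aliases hide identity; only the instructor keeps this mapping.
    alias_ids = list(range(1, len(order) + 1))
    rng.shuffle(alias_ids)
    aliases = {name: f"Author-{num:02d}" for name, num in zip(order, alias_ids)}

    # Rotating the shuffled list by 1..k positions guarantees that nobody
    # reviews themselves and every author receives exactly k reviews.
    n = len(order)
    assignments = {
        reviewer: [aliases[order[(i + shift) % n]]
                   for shift in range(1, reviews_per_student + 1)]
        for i, reviewer in enumerate(order)
    }
    return assignments, aliases


if __name__ == "__main__":
    assignments, _ = assign_blind_reviews(["Ana", "Ben", "Chloe", "Dev", "Eli"], seed=7)
    for reviewer, targets in assignments.items():
        print(reviewer, "reviews", targets)
```

Full double-blind review also requires hiding the reviewer's name on the returned feedback, which the same alias mapping could cover.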

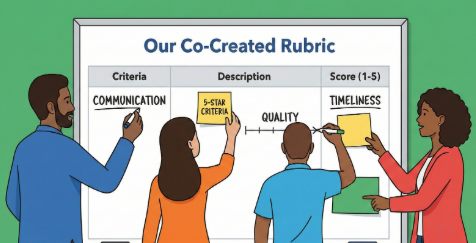

The Co-Created Rubric

One reason students dislike peer assessments is that they feel judged by arbitrary standards. A common complaint is, “Why did he give me a 3 out of 5 for communication?”

To address this, involve the group in defining the standards before the project begins. Instead of imposing a rubric, spend the first session creating one together. Ask the group specific questions:

- “What does a 5-star contribution actually look like?”

- “What counts as a failure in communication? Missing one text, or missing a meeting?”

When students help set the criteria for their skills, they are more likely to respect the process. This creates buy-in, making the eventual feedback feel fair rather than punitive.

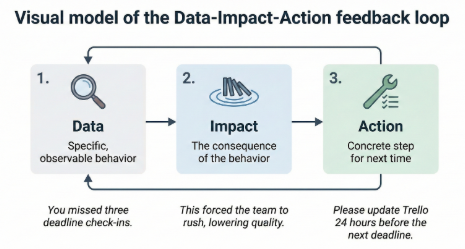

The “Data-Impact-Action” Feedback Model

“Good job” is not useful feedback; it’s a platitude. “You’re lazy” isn’t feedback either; it’s an insult. Neither helps anyone improve. To make peer feedback helpful, students should be trained to use a structured model that focuses on behavior rather than emotions.

One effective framework is Data-Impact-Action:

Data

What specific behavior or outcome did you observe? (e.g., “You missed three deadline check-ins on Slack.”)

Impact

What was the actual result of that behavior on the project? (e.g., “This forced the team to rush the final edit, lowering our overall quality.”)

Action

What specific step should happen next time? (e.g., “Please update the Trello board 24 hours before the next deadline.”)

Teaching this structure turns vague complaints into constructive critiques that guide improvement.
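If feedback is collected through a form or script, the three parts can be enforced as required fields. The sketch below is one hypothetical way to do that. The `Feedback` class and its `render` method are illustrative names, not part of any tool mentioned in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    data: str    # the specific behavior or outcome that was observed
    impact: str  # what that behavior did to the project
    action: str  # the concrete step requested for next time

    def __post_init__(self):
        # Reject entries that skip a step, e.g. a complaint with no requested action.
        for field_name in ("data", "impact", "action"):
            if not getattr(self, field_name).strip():
                raise ValueError(f"'{field_name}' must not be empty")

    def render(self) -> str:
        return (f"Data: {self.data}\n"
                f"Impact: {self.impact}\n"
                f"Action: {self.action}")


if __name__ == "__main__":
    note = Feedback(
        data="You missed three deadline check-ins on Slack.",
        impact="This forced the team to rush the final edit, lowering our overall quality.",
        action="Please update the Trello board 24 hours before the next deadline.",
    )
    print(note.render())
```

A bare “Good job” cannot be submitted in this shape, because the impact and action fields would be empty.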

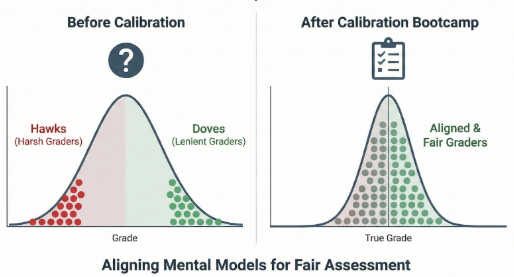

The Calibration “Bootcamp”

You wouldn’t give a student a scalpel without training, yet we often allow them to grade their peers without any instruction. This leads to bias; some students grade very harshly while others give everyone perfect scores to be nice.

To solve this, run a calibration session before real assessments. Provide a sample piece from a previous year and have everyone grade it individually using the rubric. Then, reveal the true grade and discuss the differences.

This “bootcamp” aligns the group’s understanding. It ensures that a 7 out of 10 means the same thing to everyone, creating a level playing field where grades reflect merit, not personality.
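To make the discussion after a calibration session concrete, an instructor could tabulate how far each student's score sits from the reference grade. This is a minimal sketch under assumed conventions: the function name `calibration_report`, the one-point tolerance, and the sample scores are all hypothetical.

```python
def calibration_report(scores, reference, tolerance=1.0):
    """Compare each student's score on the sample piece with the reference grade.

    scores: dict of student name -> score given to the sample
    reference: the instructor's grade for the same sample
    tolerance: largest gap (in points) still counted as calibrated
    """
    report = {}
    for student, score in scores.items():
        gap = score - reference
        if gap > tolerance:
            label = "lenient"
        elif gap < -tolerance:
            label = "harsh"
        else:
            label = "calibrated"
        report[student] = (gap, label)
    return report


if __name__ == "__main__":
    sample_scores = {"Ana": 9, "Ben": 7, "Chloe": 4}  # made-up scores out of 10
    for student, (gap, label) in calibration_report(sample_scores, reference=7).items():
        print(f"{student}: {gap:+} points ({label})")
```

The labels are only conversation starters; the value of the session lies in discussing why the gaps exist.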

The Iterative Loop (Drafts, Not Finals)

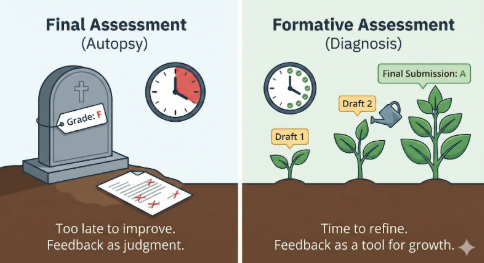

Peer assessment often fails because it comes too late. If feedback only arrives on the last day of the semester, it’s an autopsy, not a diagnosis. The student’s work is already done; you can’t change the grade.

We need to shift toward formative assessment. Schedule peer feedback at 25% and 75% completion points. This gives students a chance to implement feedback before finalizing their work.

The mental boost is huge: feedback becomes a way to improve grades, not just a judgment of a finished product. This iterative process mimics the real-world cycle of drafting, reviewing, and refining.
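The 25% and 75% checkpoints are easy to work out from a project's start and due dates. The helper below is a small sketch; `feedback_checkpoints` is a hypothetical name and the dates in the example are invented.

```python
from datetime import date, timedelta


def feedback_checkpoints(start, due, fractions=(0.25, 0.75)):
    """Return the dates that fall at the given fractions of the project timeline."""
    if due <= start:
        raise ValueError("due date must be after the start date")
    total_days = (due - start).days
    return [start + timedelta(days=round(total_days * f)) for f in fractions]


if __name__ == "__main__":
    # An eight-week project running from 6 January to 3 March 2025.
    for checkpoint in feedback_checkpoints(date(2025, 1, 6), date(2025, 3, 3)):
        print(checkpoint.isoformat())
```

For this eight-week example the checkpoints land on 20 January and 17 February, leaving two weeks after the second round to act on the feedback.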

Meta-Feedback: Grading the Grader

How do you make sure students take the review process seriously? You grade their reviews. Create a system to assess the quality of the feedback itself. Did the reviewer provide clear examples? Was the tone professional? Did they follow the Data-Impact-Action model?

If students know their grade depends on the value of their feedback, the quality of the discussions improves significantly. This “meta-feedback” loop ensures that “Great job, guys!” is no longer acceptable. It teaches the important skill of being a valuable critic.

The “Contribution” Audit

Finally, we need to separate the end product from the process. A group might produce a great report even though one person did everything, and that imbalance creates a toxic environment.

Use tools or surveys to audit contributions by focusing on “citizenship behaviors”:

- Attending meetings on time.

- Mediating conflict between members.

- Raising morale during stressful times.

Tools that track contributions help instructors adjust individual grades based on peer feedback, so that freeloaders do not benefit from high performers’ work and quiet leaders receive the credit they deserve.
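One simple way to turn peer ratings into individual grades is to scale the shared group grade by each member's rating relative to the team average. The sketch below assumes that approach. It is not how any particular tool works, and the function name `adjusted_grades`, the 1.1 cap, and the sample ratings are illustrative assumptions.

```python
def adjusted_grades(group_grade, peer_ratings, max_grade=100.0, cap=1.1):
    """Scale a shared group grade by each member's relative peer rating.

    peer_ratings: dict of member -> list of ratings received from teammates
    cap: upper limit on the multiplier, so no one gains more than 10% here
    """
    averages = {m: sum(r) / len(r) for m, r in peer_ratings.items()}
    team_average = sum(averages.values()) / len(averages)
    if team_average <= 0:
        raise ValueError("ratings must be positive")

    grades = {}
    for member, avg in averages.items():
        factor = min(avg / team_average, cap)
        grades[member] = round(min(group_grade * factor, max_grade), 1)
    return grades


if __name__ == "__main__":
    ratings = {"Ana": [5, 5], "Ben": [4, 4], "Chloe": [3, 3]}  # made-up 1-5 ratings
    print(adjusted_grades(80, ratings))
```

With a group grade of 80, the made-up ratings above give Ana 88.0 (her multiplier is capped at 1.1), Ben 80.0, and Chloe 60.0. The cap is a design choice: it limits how much a popular teammate can gain while still letting low contribution lower a grade.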

The Digital Toolkit: What to Use in 2025

The days of passing around paper forms are over. To implement these strategies successfully, you need platforms that ensure anonymity and streamline distribution.

Kritik

This platform gamifies the process and uses AI to help with grading. It emphasizes higher-order thinking during reviews.

FeedbackFruits

This tool integrates with most Learning Management Systems like Canvas or Brightspace. It offers customizable rubrics and evaluation tools to identify freeloaders and visualize team dynamics.

ComPAIR

This unique tool utilizes “comparative judgment.” Instead of grading a paper against a rubric, students view two assignments side-by-side and decide which is better. This mimics real-world decision-making and is often more intuitive for students than complex point systems.
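To show how a set of “which is better?” decisions can become a ranking, here is a deliberately simple sketch that orders assignments by the share of comparisons they won. This is a stand-in for illustration only and not ComPAIR's actual scoring method; the function name `rank_by_comparisons` and the sample judgments are hypothetical.

```python
from collections import Counter


def rank_by_comparisons(judgments):
    """Rank assignments by the share of pairwise comparisons they won.

    judgments: list of (winner, loser) pairs, one per student decision
    """
    wins, appearances = Counter(), Counter()
    for winner, loser in judgments:
        wins[winner] += 1
        appearances[winner] += 1
        appearances[loser] += 1
    win_rate = {a: wins[a] / appearances[a] for a in appearances}
    # Sort by win rate (highest first); ties are broken alphabetically.
    return sorted(win_rate, key=lambda a: (-win_rate[a], a))


if __name__ == "__main__":
    decisions = [("Essay A", "Essay B"), ("Essay A", "Essay C"),
                 ("Essay B", "Essay C"), ("Essay A", "Essay B")]
    print(rank_by_comparisons(decisions))
```

Win rate ignores how strong each opponent was, so production systems typically rely on more careful statistical models.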

The Verdict

Peer assessment is not just a way for instructors to save time on grading. It simulates the professional world.

In a design firm, law office, or tech startup, your career path is shaped by your ability to critique others’ work and improve yours based on their input.

By using strategies like the Data-Impact-Action model, blind reviews, and calibration, we move past awkward social dynamics toward genuine skill development.

The goal is not only to create a better project but also to develop better professionals.


FAQs

1. What is peer assessment in education?

Peer assessment in education is a process in which students evaluate each other’s work against structured criteria. It helps learners develop critical thinking, accountability, and teamwork skills while deepening their understanding of course content.

2. Why is peer assessment in education important?

It matters because it mirrors professional environments where feedback comes from multiple sources. Through it, students learn to accept constructive criticism and improve their performance collaboratively.

3. How does peer assessment in education benefit students?

It enhances learning outcomes, fosters collaboration, and develops communication skills. Students who take part gain insight into their own strengths and weaknesses while learning from their peers.

4. What are the common challenges of peer assessment in education?

Common challenges include bias, unequal participation, and fear of offending classmates. Proper training and structured rubrics can reduce these problems and make feedback more objective and fair.

5. How can teachers implement peer assessment in education effectively?

Teachers can implement it effectively by creating clear rubrics, providing examples, and using digital tools for anonymity. Structured guidance makes the exercise a learning opportunity rather than just a grading task.

6. Which tools are useful for peer assessment in education?

Digital platforms such as Kritik, FeedbackFruits, and ComPAIR support peer assessment. These tools streamline the process, maintain anonymity, and help track contributions, making it more efficient and transparent.

7. How does peer assessment in education improve professional skills?

It teaches students how to give and receive feedback constructively, which prepares them for collaborative work environments where continuous evaluation is the norm.

8. What is the role of rubrics in peer assessment in education?

Rubrics set clear expectations and evaluation standards. With a rubric in hand, students can give more objective feedback and understand what constitutes quality work.

9. How can students overcome anxiety in peer assessment in education?

Students can reduce anxiety by practicing blind reviews and using structured feedback models. The process becomes less stressful when feedback focuses on behaviors and outcomes rather than personal judgments.

10. How does peer assessment in education help keep grades fair?

It weighs individual contributions alongside group performance. Combined with meta-feedback and contribution audits, it helps instructors ensure that each student receives recognition for their effort.

Penned by Sanskriti

Edited by Pranjali, Research Analyst
